Artificial intelligence is at an inflection point. Over the past few years, enterprises have rushed to adopt large language models (LLMs), experimenting with prompts, copilots and chat interfaces. These early wins created excitement, but they also revealed a deeper truth: while AI can generate impressive outputs, it still struggles to operate reliably within the realities of an enterprise.

Large language models are inherently context-blind. They do not understand your business, your customers, your policies, or the subtle decision logic that drives outcomes. They do not remember past workflows, and when context is missing, they fill the gaps with generalized assumptions. That is why so many AI pilots fail to scale. The model may work in isolation, but it cannot operate within a business system.

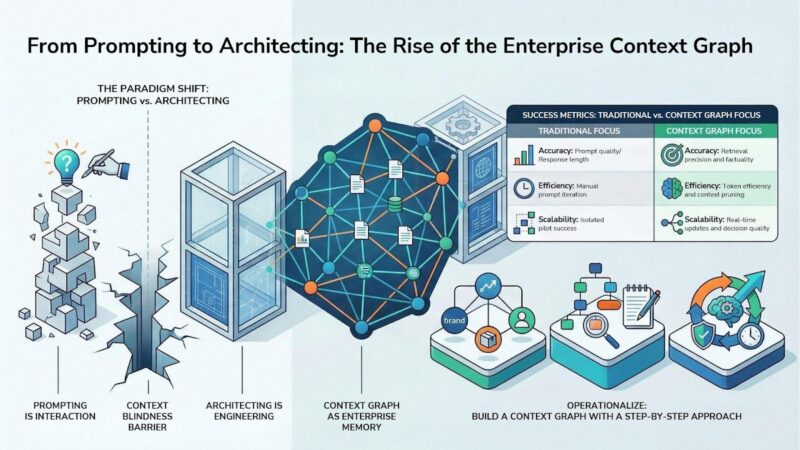

Prompting is about interacting with AI. Architecting, or what is increasingly being called context engineering, is about shaping the environment in which AI operates. It shifts the focus away from writing better prompts and toward building a system that continuously provides the right information, at the right time, in the right structure. Instead of optimizing outputs, organizations begin designing the inputs that determine those outputs.

What Is a Context Graph?

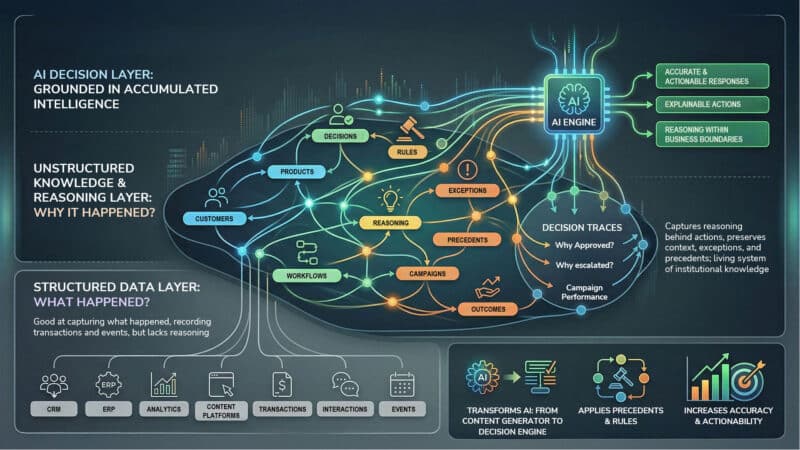

Traditional enterprise systems such as CRM, ERP, analytics and content platforms are good at capturing what happened. They record transactions, interactions and events. But they rarely capture why decisions were made. Why was an exception approved? Why was there a customer escalation? Why did one campaign outperform another? Those answers often live in Slack threads, emails, undocumented workflows, or the minds of experienced operators.

A context graph captures this missing layer. It connects entities such as customers, products, locations, content and services with relationships, decisions, rules and outcomes. More importantly, it preserves decision traces: the reasoning, context and exceptions behind actions taken across the organization. Over time, this becomes a living system of institutional knowledge that AI can use.

Context graphs help transform AI from a content generator into a decision engine. When AI is grounded in a context graph, it no longer relies only on generic training data. It operates on the accumulated intelligence of your organization. It can reason within the boundaries of your business, apply precedents and respond in a way that is more accurate, explainable and actionable.

How to Build a Context Graph: A Step-by-Step Approach

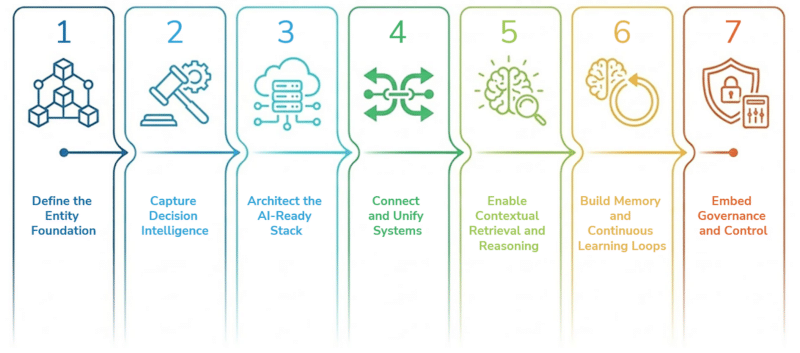

Step 1: Define the entity foundation

The first step is clarity. Start by identifying the entities that matter most to your business: brands, products, locations, customers, services, teams and key intents. Then define how these entities relate to one another.

This is the foundation, because AI cannot reason well in the face of ambiguity. If the business does not clearly define what a product is, how it differs from a service, or how a location connects to a brand, the model will make assumptions. A robust entity foundation provides AI with the structure it needs to interpret meaning accurately. This is where entity strategy becomes the backbone of AI visibility and enterprise understanding. The next four steps show how to create an entity strategy.
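As a rough illustration, an entity foundation could be modeled as typed entities plus explicit relationships. This is a minimal in-memory sketch, not a recommended implementation: the names `Entity`, `Relationship` and `EntityGraph`, the relation names and the sample data are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Entity:
    id: str
    type: str  # e.g. "brand", "product", "location" (illustrative types)
    name: str


@dataclass(frozen=True)
class Relationship:
    source: str    # id of the source entity
    relation: str  # e.g. "owns", "sold_at" (hypothetical relation names)
    target: str    # id of the target entity


@dataclass
class EntityGraph:
    entities: dict[str, Entity] = field(default_factory=dict)
    relationships: list[Relationship] = field(default_factory=list)

    def add_entity(self, entity: Entity) -> None:
        self.entities[entity.id] = entity

    def relate(self, source: str, relation: str, target: str) -> None:
        # Refuse edges to undefined entities so ambiguity surfaces early.
        for entity_id in (source, target):
            if entity_id not in self.entities:
                raise KeyError(f"unknown entity: {entity_id}")
        self.relationships.append(Relationship(source, relation, target))

    def related(self, source: str, relation: str) -> list[Entity]:
        return [
            self.entities[r.target]
            for r in self.relationships
            if r.source == source and r.relation == relation
        ]


# Sample data, invented for illustration.
graph = EntityGraph()
graph.add_entity(Entity("brand:acme", "brand", "Acme"))
graph.add_entity(Entity("product:widget", "product", "Widget"))
graph.add_entity(Entity("location:austin", "location", "Austin store"))
graph.relate("brand:acme", "owns", "product:widget")
graph.relate("product:widget", "sold_at", "location:austin")
```

Rejecting relationships that point at undefined entities is one way to force the definitions to exist before anything depends on them.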

Step 2: Capture decision intelligence

Once the entity foundation is in place, the next step is to capture how the business actually works. This means documenting not just outcomes, but also the reasoning behind them. Why was a discount approved? Why was a policy exception made? Why did support escalate a ticket? Why did one customer receive a different experience from another?

This decision layer is critical because most enterprise value lives in exceptions, judgment calls and operational nuance. Capturing these patterns turns day-to-day business behavior into structured memory. Over time, AI begins learning from real decision-making history rather than relying solely on abstract rules.
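One way to picture a decision trace is as a record that stores the rationale and outcome alongside the decision itself. The sketch below assumes a simple in-memory store; `DecisionTrace`, `DecisionMemory`, the field names and the sample records are hypothetical and exist only to show the shape of the data.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class DecisionTrace:
    decision: str     # what was decided
    entity_id: str    # entity the decision applies to
    rationale: str    # why it was decided
    outcome: str      # what happened afterwards
    decided_on: date
    is_exception: bool = False


class DecisionMemory:
    def __init__(self) -> None:
        self._traces: list[DecisionTrace] = []

    def record(self, trace: DecisionTrace) -> None:
        self._traces.append(trace)

    def precedents(self, entity_id: str) -> list[DecisionTrace]:
        """Return past decisions for an entity, most recent first."""
        matches = [t for t in self._traces if t.entity_id == entity_id]
        return sorted(matches, key=lambda t: t.decided_on, reverse=True)


# Sample records, invented for illustration.
memory = DecisionMemory()
memory.record(DecisionTrace(
    decision="approve 15% discount",
    entity_id="customer:42",
    rationale="renewal at risk after two unresolved support tickets",
    outcome="renewed for 12 months",
    decided_on=date(2024, 3, 1),
    is_exception=True,
))
memory.record(DecisionTrace(
    decision="escalate ticket to tier 2",
    entity_id="customer:42",
    rationale="issue recurred within 30 days",
    outcome="resolved",
    decided_on=date(2024, 5, 10),
))
```

Calling `memory.precedents("customer:42")` returns both traces with the escalation first, which is the kind of history an AI system could consult before proposing a new exception.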

Step 3: Architect the AI-ready stack

To make the context graph usable by AI, enterprises need an architecture that combines semantic meaning with operational intelligence.

At the core sits the data layer, where the knowledge graph models key entities and relationships. Above that is the decision memory layer, which captures decisions, rationale and outcomes. The policy layer embeds business rules, compliance requirements and access controls. Then comes the agent layer, where AI systems reason, retrieve and act against the graph. Finally, the integration layer connects this architecture to enterprise systems such as CMS, CDP, PIM, CRM and workflow tools.

This architecture matters because AI does not just need data. It needs structured meaning, business logic and governed access to act responsibly.
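To make the layering concrete, here is a deliberately small sketch of how an agent's context might be assembled from the data, decision memory and policy layers. Each layer is reduced to a plain dictionary, the integration layer is left out, and `ContextStack`, `build_context` and the sample values are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ContextStack:
    # Each layer is a plain dict here; real layers would be separate services.
    data_layer: dict[str, dict] = field(default_factory=dict)               # entity id -> attributes
    decision_memory: dict[str, list[str]] = field(default_factory=dict)    # entity id -> past decisions
    policy_layer: dict[str, list[str]] = field(default_factory=dict)       # entity type -> rules

    def build_context(self, entity_id: str) -> dict:
        """Assemble what the agent layer would receive for one entity."""
        entity = self.data_layer[entity_id]
        return {
            "entity": entity,
            "precedents": self.decision_memory.get(entity_id, []),
            "rules": self.policy_layer.get(entity["type"], []),
        }


# Sample values, invented for illustration.
stack = ContextStack(
    data_layer={"customer:42": {"type": "customer", "tier": "gold"}},
    decision_memory={"customer:42": ["15% discount approved: renewal at risk"]},
    policy_layer={"customer": ["discounts above 20% need manager approval"]},
)
context = stack.build_context("customer:42")
```

The point of the sketch is only that the agent receives entity data, precedents and rules together, rather than raw data alone.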

Step 4: Connect and unify systems

With entities and decision logic defined, the next step is to connect the systems where this knowledge lives. Content platforms, customer data platforms, CRMs, PIMs, DAMs, service platforms and internal knowledge systems all hold pieces of the puzzle.

The goal is not to centralize everything into a single monolithic platform, but to enable interoperability. AI needs a cohesive layer that can access signals, relationships and records across systems without losing meaning, so that it can operate across the enterprise rather than within isolated silos. To support this, organizations are increasingly adopting the Model Context Protocol (MCP), which acts as a ‘USB-C for AI,’ providing a standardized and secure way for models to connect with external databases, CMS platforms and APIs without requiring custom integrations for each system.
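The idea of one calling convention across many systems can be sketched in plain Python. To be clear, this is not the MCP SDK or its wire format; `ConnectorRegistry`, the tool names and the stub handlers are hypothetical stand-ins meant only to show interoperability without centralization.

```python
from typing import Callable


class ConnectorRegistry:
    """One calling convention for many systems (not the MCP SDK or wire format)."""

    def __init__(self) -> None:
        self._tools: dict[str, Callable[..., dict]] = {}

    def register(self, name: str, handler: Callable[..., dict]) -> None:
        if name in self._tools:
            raise ValueError(f"tool already registered: {name}")
        self._tools[name] = handler

    def list_tools(self) -> list[str]:
        return sorted(self._tools)

    def call(self, name: str, **arguments) -> dict:
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return self._tools[name](**arguments)


# Stub handlers standing in for real CRM and CMS lookups.
def crm_get_customer(customer_id: str) -> dict:
    return {"source": "crm", "customer_id": customer_id, "tier": "gold"}


def cms_get_page(slug: str) -> dict:
    return {"source": "cms", "slug": slug, "title": "Returns policy"}


registry = ConnectorRegistry()
registry.register("crm.get_customer", crm_get_customer)
registry.register("cms.get_page", cms_get_page)
```

Each system keeps its own data and logic; the AI layer only needs to know the tool name and its arguments, for example `registry.call("crm.get_customer", customer_id="42")`.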

Step 5: Enable contextual retrieval and reasoning

Traditional retrieval approaches are not enough for enterprise AI. Pulling isolated text chunks based on similarity may help answer simple questions, but it misses the relationships between concepts. That is why organizations are moving toward graph-based retrieval and reasoning.

In this model, AI does not just retrieve a document. It understands how a customer relates to a product, how that product connects to a support issue and how that issue links to an intent signal or business rule. This relationship-aware retrieval enables deeper, multi-step reasoning and produces responses that are far more relevant and complete.
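Relationship-aware retrieval can be illustrated with a breadth-first traversal that returns whole relationship paths instead of isolated chunks. The adjacency list, the node ids and the function name `retrieve_paths` below are invented for this sketch; a production system would more likely query a graph database.

```python
from collections import deque

# Adjacency list: node -> list of (relation, neighbor). Sample data is illustrative.
EDGES = {
    "customer:42": [("purchased", "product:widget")],
    "product:widget": [("has_issue", "issue:overheating")],
    "issue:overheating": [("governed_by", "rule:replace-within-30-days")],
}


def retrieve_paths(start: str, max_hops: int) -> list[list[str]]:
    """Breadth-first traversal returning each relationship path of up to max_hops edges."""
    paths: list[list[str]] = []
    queue = deque([(start, [start], 0)])
    visited = {start}
    while queue:
        node, path, hops = queue.popleft()
        if hops == max_hops:
            continue  # do not expand beyond the hop limit
        for relation, neighbor in EDGES.get(node, []):
            if neighbor in visited:
                continue  # avoid cycles and duplicate paths
            visited.add(neighbor)
            new_path = path + [relation, neighbor]
            paths.append(new_path)
            queue.append((neighbor, new_path, hops + 1))
    return paths


paths = retrieve_paths("customer:42", max_hops=3)
```

With three hops, the longest path links the customer to the product, the product to the support issue and the issue to the business rule, which is the multi-step chain that similarity search over text chunks tends to miss.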

Step 6: Build memory and continuous learning loops

A context graph should not be static. It should learn continuously.

Every interaction, decision, correction and outcome should feed back into the system. This creates a living memory layer that becomes richer over time. Instead of relying on humans to constantly rewrite prompts, the system evolves alongside the business. This is how organizations move from manual prompting to scalable, agentic workflows.

Real-time updates matter here. Streaming changes from systems like CMS, CRM, or commerce platforms help keep the graph current, so AI is always acting on the latest state of the business, not yesterday’s snapshot.
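A minimal sketch of keeping the graph current might apply change events as they arrive and discard stale ones. It assumes each source system attaches a per-entity version number that only increases, which is an assumption of this example and not something every platform provides; `ChangeEvent` and `LiveGraph` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class ChangeEvent:
    entity_id: str
    attributes: dict
    version: int  # assumed to increase with every change to the same entity


class LiveGraph:
    def __init__(self) -> None:
        self._state: dict[str, dict] = {}
        self._versions: dict[str, int] = {}

    def apply(self, event: ChangeEvent) -> bool:
        """Apply an event unless the same or a newer version was already applied."""
        if event.version <= self._versions.get(event.entity_id, -1):
            return False  # stale or duplicate event, ignore it
        self._state.setdefault(event.entity_id, {}).update(event.attributes)
        self._versions[event.entity_id] = event.version
        return True

    def get(self, entity_id: str) -> dict:
        # Return a copy so callers cannot mutate graph state directly.
        return dict(self._state.get(entity_id, {}))


live = LiveGraph()
live.apply(ChangeEvent("product:widget", {"price": 20, "in_stock": True}, version=1))
live.apply(ChangeEvent("product:widget", {"price": 18}, version=2))
stale_applied = live.apply(ChangeEvent("product:widget", {"price": 25}, version=1))
```

Here the late-arriving version 1 event is rejected, so the graph keeps the price from version 2 instead of reverting to an older snapshot.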

Step 7: Embed governance and control

Governance cannot be an afterthought. Brand rules, compliance requirements, permissions and approvals must be built directly into the architecture. If this layer is missing, AI fills the gap with generic internet knowledge or inconsistent interpretations. That is where hallucinations, brand drift and operational risk begin. When governance is encoded into the context graph, AI can operate within clear boundaries, respect access controls and represent the business accurately and consistently.
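As one possible illustration of governance encoded in the graph itself, each fact can carry the roles allowed to see it, and context can be filtered before it ever reaches the model. `GovernedFact`, `visible_context`, the role names and the sample facts are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GovernedFact:
    text: str
    allowed_roles: frozenset  # roles permitted to see this fact


def visible_context(facts: list[GovernedFact], role: str) -> list[str]:
    """Return only the facts the given role is permitted to see."""
    return [fact.text for fact in facts if role in fact.allowed_roles]


# Sample facts, invented for illustration.
FACTS = [
    GovernedFact("Standard return window is 30 days.", frozenset({"support", "sales"})),
    GovernedFact("Gold-tier discount ceiling is 20%.", frozenset({"sales"})),
]

support_view = visible_context(FACTS, "support")
sales_view = visible_context(FACTS, "sales")
```

Filtering at retrieval time means a support-facing assistant never receives the discount ceiling at all, which is a stronger guarantee than instructing the model not to mention it.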

What makes a context graph effective

A useful context graph is not just connected; it is usable. It must be structured enough for AI to reason over it, current enough to reflect real-world changes and governed enough to be trusted. It should reduce ambiguity, capture institutional knowledge and improve with every interaction. In other words, it should serve as the enterprise memory and intelligence layer on which AI depends.

Success in this new model is not measured only by clicks, rankings, or prompt quality. It is measured by whether AI is more accurate, more grounded and more useful to the business.

The most important measures include retrieval precision, factuality, decision quality, latency and business outcomes. Organizations should also track whether AI is improving over time, whether it is using the right context and whether it is reducing manual effort while preserving trust and control. Token efficiency and context pruning also matter because the right architecture should make AI both smarter and more efficient.
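Retrieval precision, the first measure listed, is straightforward to compute once a set of known-relevant items exists for a query. The sketch below uses the common precision-at-k convention of dividing by k; the function name and the sample ids are illustrative.

```python
def precision_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the top-k retrieved items that are relevant (divides by k)."""
    if k <= 0:
        raise ValueError("k must be positive")
    hits = sum(1 for item in retrieved[:k] if item in relevant)
    return hits / k


# Sample ids, invented for illustration.
retrieved = ["doc:a", "doc:b", "doc:c", "doc:d"]
relevant = {"doc:a", "doc:c", "doc:e"}

p_at_4 = precision_at_k(retrieved, relevant, k=4)  # 2 of the top 4 are relevant
p_at_1 = precision_at_k(retrieved, relevant, k=1)  # the top result is relevant
```

Tracking this number over time, per query type, gives one concrete signal of whether the system is in fact using the right context.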

Why this matters for enterprise leaders

As models become commoditized, competitive advantage will not come from access to AI alone. It will come from the quality of the context an organization provides to that AI.

The winners will not be the companies with the cleverest prompts. They will be the ones who build the richest, most structured, most continuously improving context layer. Their AI systems will be faster to adapt, better aligned to the business and harder for competitors to replicate.

That is why the Context Graph is emerging as one of the most strategic assets an enterprise can build.

The shift from prompting to architecting is not a minor optimization. It is a fundamental change in how enterprises operationalize AI.

The future of AI will not be defined by who has the best model. It will be defined by who owns and architects the context on which the model depends.

And that context, captured in a well-designed Context Graph, will become the intelligence layer that powers the next era of enterprise growth.

The post How to make AI work with context instead of prompts appeared first on MarTech.