Anthropic just crossed a $30 billion revenue run rate, built on companies deploying AI agents into core workflows. Eighty-two percent of those companies’ CIOs admit they cannot govern what those agents are actually doing.

That is not an AI capacity problem. That is an unpriced liability running at production speed.

What is the Shadow Ledger?

There is a financial register running in your company right now that does not appear on any dashboard. It accumulates every time an AI agent makes a commitment without codified authority, contradicts another agent’s output, or makes a decision nobody can explain when the question gets asked.

Call it the Shadow Ledger.

Three people in your organization are watching it grow. They do not know it has a name yet.

Your CFO sees the budget expanding even as AI adoption increases. Headcount on AI-augmented teams is higher than projected, not lower. The humans are correcting, apologizing, and cleaning up what the agents produced. The efficiency gain is a mirage.

Your CMO watches win rates decline in segments where the company should dominate. Exit interviews surface the same word: inconsistent. Customers describe talking to three different companies depending on which touchpoint they hit.

Your compliance lead is sitting on exposure they cannot quantify: agents making commitments that have never been logged, reviewed, or mapped to any written policy.

Three people. Three dashboards. One Shadow Ledger.

Where does the Shadow Ledger actually live?

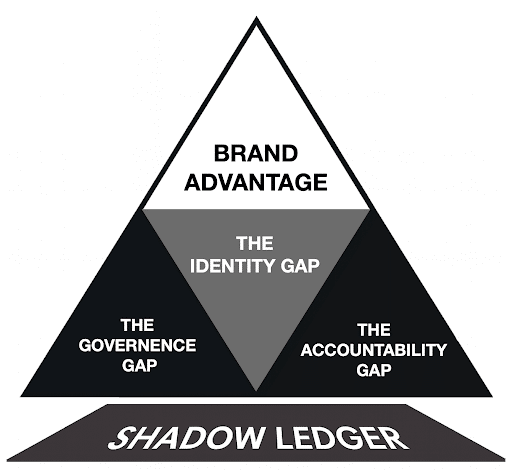

The chaos leaks through three specific architectural defects, which form the load-bearing nodes of the Shadow Ledger:

- The Governance Gap (Missing Regulatory Guardrails): Where financial and legal exposure accumulates because no codified rules define what agents are authorized to do.

- The Accountability Gap (Lack of Traceable Provenance): Where systemic misjudgment and oversight collapse occur because no output can be traced back to the authority that should have governed it.

- The Identity Gap (Incoherent AI Persona): Where inconsistent customer experiences erode brand trust because agents speak with different voices across every touchpoint.

Every gap at the base compounds invisibly until it surfaces as a crisis. A $200K renewal lost. A regulatory inquiry. A VP explaining to the board why three agents gave three different answers to the same customer.

Stanford’s 2025 AI Index documented 233 AI-related incidents in 2024, a 56% year-over-year increase. Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027, citing escalating costs, unclear business value, and inadequate risk controls.

The Shadow Ledger is not a theoretical risk. It is already on the books.

Why is a transaction log not the same as a governance record?

Most organizations that claim they can audit AI decisions are producing transaction records. A transaction record tells you what happened: which agent fired, what output it produced, when and where.

What it does not tell you is which rule authorized the decision. A transaction record and a governance record are two fundamentally different artifacts. One is a receipt. The other is a governance record.

When regulators or board members ask “why did this happen,” they are not asking for the transaction log. They are asking for the authorization chain. Most organizations do not have one because the system was never designed to produce it.
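To make the receipt-versus-governance-record distinction concrete, here is a minimal Python sketch of the two artifacts. The field names (`rule_id`, `rule_owner`, `policy_version`), the `why` helper, and the example values are hypothetical, chosen only for illustration. The point is structural: the governance record carries a link to the authority that permitted the action, and the transaction record has nowhere to put one.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Union


@dataclass(frozen=True)
class TransactionRecord:
    """The receipt: which agent fired, what it produced, and when."""
    agent_id: str
    action: str
    output: str
    timestamp: datetime


@dataclass(frozen=True)
class GovernanceRecord:
    """The receipt plus the authorization chain behind it (hypothetical fields)."""
    transaction: TransactionRecord
    rule_id: str         # hypothetical ID of the codified rule that permitted the action
    rule_text: str       # the rule in plain language
    rule_owner: str      # the accountable human role that set the rule
    policy_version: str  # the policy version in force at decision time


def why(record: Union[TransactionRecord, GovernanceRecord]) -> str:
    """Answer the question a regulator or board member actually asks."""
    if isinstance(record, GovernanceRecord):
        tx = record.transaction
        return (f"{tx.agent_id} performed '{tx.action}' under rule {record.rule_id} "
                f"({record.rule_text}), owned by {record.rule_owner}, "
                f"policy {record.policy_version}")
    # A bare transaction record can say what happened, but not what authorized it.
    return f"unknown: {record.agent_id} performed '{record.action}' with no recorded authority"


receipt = TransactionRecord("renewal-agent", "offer_discount", "15% off renewal",
                            datetime.now(timezone.utc))
governed = GovernanceRecord(receipt, "PRICING-007", "renewal discounts up to 20%",
                            "VP Sales", "2025-Q1")
print(why(receipt))
print(why(governed))
```

Both records describe the same event; only the second can answer "why" without someone reconstructing the decision after the fact.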

Here is the uncomfortable part. Can a CFO produce an audit log of every human decision that touched last quarter’s revenue? In most organizations, no. The agents did not create a new category of ungoverned decisions. They revealed the ones that were already happening and ran them at a speed that made the consequences impossible to ignore.

Organizations that treat this as an AI problem will keep patching tool by tool. Organizations that treat it as an operating model problem will close the ledger once and benefit from every agent they add afterward.

What architecture actually closes the Shadow Ledger?

The Shadow Ledger closes when a governance layer sits above the agent execution environment and every agent queries it before acting. What am I authorized to do here? What must I do? What am I prohibited from doing regardless of what my optimization objective says?
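One way to picture that pre-action query is the minimal Python sketch below. It assumes rules are stored as simple (role, action) pairs in three tables that mirror the three questions: authorized, required, prohibited. `GovernanceLayer`, `Ruling`, and the roles and actions in the example are hypothetical names for illustration, not any specific product's API.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple

Key = Tuple[str, str]  # (agent role, action): a hypothetical rule key


@dataclass
class Ruling:
    allowed: bool
    obligations: List[str]  # what the agent must also do if it proceeds
    reason: str


@dataclass
class GovernanceLayer:
    """Hypothetical rule store that agents query before every action."""
    authorized: Set[Key] = field(default_factory=set)
    required: Dict[Key, List[str]] = field(default_factory=dict)
    prohibited: Set[Key] = field(default_factory=set)

    def query(self, role: str, action: str) -> Ruling:
        key = (role, action)
        # 1. Prohibited? Checked first, so no authorization or objective can override it.
        if key in self.prohibited:
            return Ruling(False, [], f"prohibited: {action} for {role}")
        # 2. Authorized? Default deny: no codified authority means no action.
        if key not in self.authorized:
            return Ruling(False, [], f"no codified authority: {action} for {role}")
        # 3. Required? Attach any obligations that come with the authority.
        return Ruling(True, list(self.required.get(key, [])), f"authorized: {action} for {role}")


layer = GovernanceLayer(
    authorized={("support-agent", "issue_refund"), ("support-agent", "promise_delivery_date")},
    required={("support-agent", "issue_refund"): ["log refund reason", "notify account owner"]},
    prohibited={("support-agent", "promise_delivery_date")},
)

for action in ("issue_refund", "promise_delivery_date", "change_contract_terms"):
    ruling = layer.query("support-agent", action)
    print(action, ruling.allowed, ruling.obligations, ruling.reason)
```

Checking prohibitions first and denying by default are deliberate choices in this sketch: an action that appears in both tables is still refused, and an agent that finds no rule at all does not act.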

When that layer exists, three things change. The CFO can see where AI is creating cleanup work and fix the authority rules that caused it. The CMO can trace inconsistency to the specific agents producing it. The compliance lead can export decision records in minutes, not weeks.

Governance is not the brake. It is the rail that makes it safe to accelerate.

A Decision Gate enforces the rules. Decision Architecture informs the gate. Decision Rights are where they come from: extracted directly from your leadership’s risk appetite, judgment, and organizational intent. You cannot buy the first. You cannot skip the second. The third is what makes both of them mean something.
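Here is a minimal Python sketch, under loudly stated assumptions, of how the three pieces could relate. A hypothetical `DECISION_RIGHTS` dict stands in for a limit leadership has stated, `discount_rule` stands in for the Decision Architecture that turns that limit into a check, and `DecisionGate` enforces the check before the agent acts. Every name, including the 20% limit, is illustrative rather than a real product or policy. The gate also writes a record for every attempt, allowed or refused, and `export()` serializes those records as JSON lines, the kind of artifact a compliance lead could hand over without reconstructing decisions by hand.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Callable, List, Optional, Tuple

# Decision Rights (hypothetical): a limit leadership has stated explicitly.
DECISION_RIGHTS = {"max_discount_pct": 20, "owner": "CFO"}


# Decision Architecture (hypothetical): turns a stated right into a checkable rule.
def discount_rule(request: dict) -> Tuple[bool, str]:
    limit = DECISION_RIGHTS["max_discount_pct"]
    allowed = request.get("discount_pct", 0) <= limit
    return allowed, f"discount_pct <= {limit} (owner: {DECISION_RIGHTS['owner']})"


@dataclass
class DecisionRecord:
    agent_id: str
    request: dict
    allowed: bool
    rule: str
    timestamp: str


class DecisionGate:
    """Checks a rule before an agent acts and records the outcome either way."""

    def __init__(self, rule: Callable[[dict], Tuple[bool, str]]):
        self.rule = rule
        self.records: List[DecisionRecord] = []

    def execute(self, agent_id: str, request: dict,
                action: Callable[[dict], str]) -> Optional[str]:
        allowed, rule_text = self.rule(request)
        self.records.append(DecisionRecord(
            agent_id, request, allowed, rule_text,
            datetime.now(timezone.utc).isoformat()))
        # The action runs only if the rule allows it; refusals are logged too.
        return action(request) if allowed else None

    def export(self) -> str:
        """One JSON object per line, ready to hand to a compliance reviewer."""
        return "\n".join(json.dumps(asdict(r)) for r in self.records)


gate = DecisionGate(discount_rule)
offer = lambda r: f"offered {r['discount_pct']}% off"
print(gate.execute("renewal-agent", {"discount_pct": 15}, offer))
print(gate.execute("renewal-agent", {"discount_pct": 35}, offer))
print(gate.export())
```

In this sketch, changing the stated right (the dict) changes what the gate enforces without touching the agent, which mirrors the point that the gate only means something because of where its rules come from.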


The post Why AI agent adoption is creating unseen risk across the enterprise appeared first on MarTech.