AI agents are spreading quickly across organizations. They’re replacing specific components, layering on top of existing systems and introducing something fundamentally new: probabilistic decision-making inside deterministic architectures.

The question isn’t whether to use agents. It’s whether you’re deliberately architecting how they operate within your business. Without clear boundaries, complexity scales faster than value. Here’s a framework to turn that complexity into leverage.

Do you allow agents to disrupt your stack?

AI agents are popping up everywhere inside company stacks. Sales pilots an SDR agent. Support deploys a chatbot. Marketing rolls out a content copilot. Operations experiments with an agentic workflow tool. Each initiative makes sense in isolation. Together, they introduce new behavior into already complex architectures.

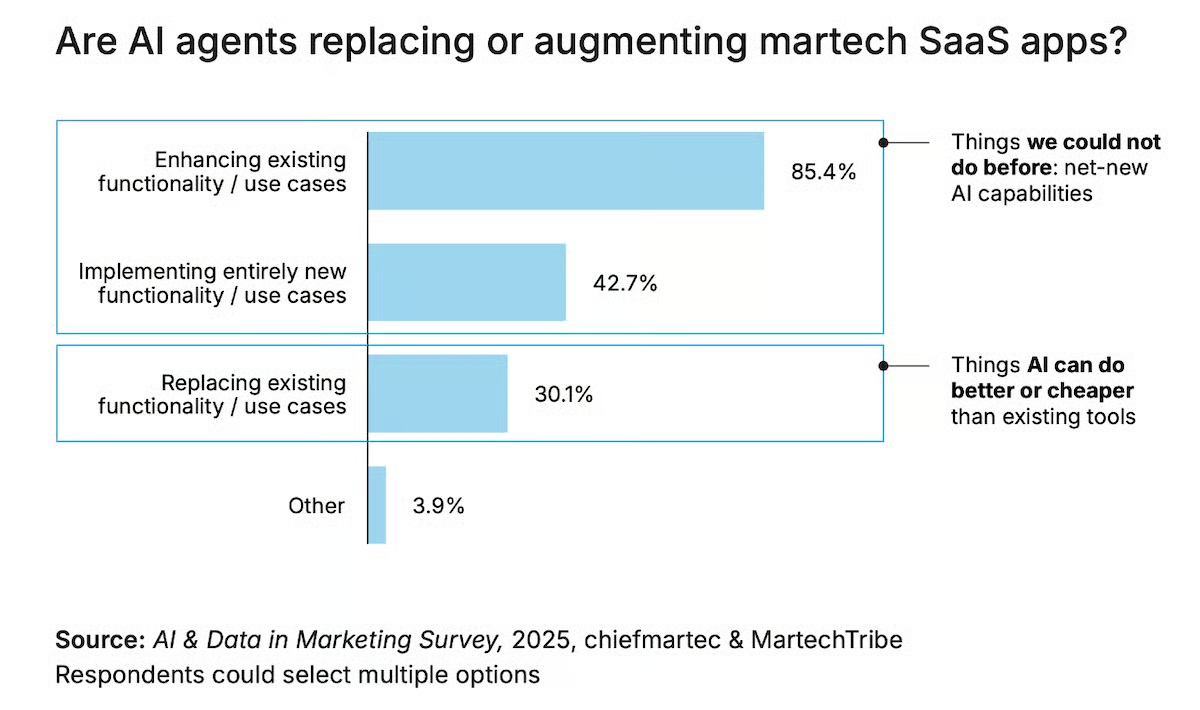

Some argue that AI will replace traditional CRM, CMS, CDP or MAP platforms, simplifying the stack in the process. Our research data points the other way. Only 30.1% of companies use AI to replace specific SaaS use cases, while far more (85.4%) use AI to enhance existing ones.

Adoption looks impressive on the surface, but only 23.3% of companies have agents fully in production. In May 2025, just 6.3% had AI fully integrated into the marketing stack. Teams can experiment quickly, but they struggle to connect agents end-to-end across deterministic systems. The real challenge is integration.

Without a shared architectural model, each team defines good output differently. Policies live in slide decks. Guardrails vary by department. Context is fragmented across tools. The result is drift, risk and fragile automation. What companies need isn’t more pilots. They need a clear framework for how agents operate within the stack.


The architecture shift we actually need

Most organizations treat agents as add-ons. A copilot is added here, an automation agent is piloted there and each is plugged into existing workflows like just another SaaS module. That approach worked when everything in the stack followed deterministic logic. It doesn’t work when decision-making itself becomes probabilistic.

Traditional systems were designed to safeguard the company’s truth: customer data in CRM, product information in PIM, consent status, pricing logic and compliance rules. These systems define what is correct, auditable and governed. They are the foundation that keeps the business consistent across regions, brands and teams.

Agents introduce something fundamentally different. They don’t simply execute predefined logic. They interpret signals and determine what action makes sense in context. That shift means the stack now contains two types of systems: those that define truth and those that decide how to act on that truth in a specific moment.

The probabilistic block, made up of agents and the models that steer them, operates as a system of context on top of systems of record. The systems of record remain responsible for data integrity and policy enforcement. The agentic layer is responsible for interpreting that data and recommending or executing actions within defined boundaries.

The boundary between those roles is critical. When contextual agents begin altering customer records, product attributes or compliance logic without explicit constraints, risk increases. When deterministic foundations explicitly constrain how agents can act, scale becomes possible.

The architectural shift is clear: contextual decision-making must be deliberately designed to operate within governed company truth.
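For teams implementing this, the boundary can be expressed directly in code. The sketch below is a minimal Python illustration, not a reference implementation: the record shape, the field names and the `apply_if_allowed` gate are all hypothetical. The agent only proposes a change; a deterministic rule owned by the system of record decides whether it is written, and anything not explicitly allowed is rejected.

```python
from dataclasses import dataclass, field

# Hypothetical governance rules. In a real stack these would be defined
# and enforced by the system of record, not by the agent.
AGENT_WRITABLE_FIELDS = {"next_best_action", "engagement_score"}
PROTECTED_FIELDS = {"email", "consent_status", "price_tier"}


@dataclass
class CustomerRecord:
    record_id: str
    attributes: dict = field(default_factory=dict)


@dataclass
class ProposedChange:
    """A change an agent wants to make: a request, not a write."""
    record_id: str
    field_name: str
    new_value: object
    rationale: str = ""


def apply_if_allowed(record: CustomerRecord, change: ProposedChange) -> bool:
    """Write the agent's change only if the field is explicitly agent-writable."""
    if change.record_id != record.record_id:
        return False  # the proposal targets a different record
    if change.field_name in PROTECTED_FIELDS:
        return False  # company truth: agents never overwrite these fields
    if change.field_name not in AGENT_WRITABLE_FIELDS:
        return False  # default deny for any field not explicitly opened up
    record.attributes[change.field_name] = change.new_value
    return True


record = CustomerRecord("c-42", {"consent_status": "opted_out"})
print(apply_if_allowed(record, ProposedChange("c-42", "next_best_action", "send_case_study")))  # True
print(apply_if_allowed(record, ProposedChange("c-42", "consent_status", "opted_in")))  # False
```

The design choice that matters here is the default deny: a new agent gains no write access until someone deliberately adds a field to the allowed list.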

Unpacking the agentic stack framework

The framework combines deterministic SaaS and probabilistic AI into one coherent architecture. To understand it properly, it helps to walk through the layers from the bottom up.

Hyperscale layer

At the base of the stack sits commoditized capability. This is where scale, storage and performance live. It doesn’t differentiate the company, but it makes everything above it possible. The hyperscale foundation consists of three core components:

- Cloud infrastructure providers such as Google Cloud, Microsoft Azure, AWS, Scaleway, Oracle Cloud and Alibaba Cloud provide compute capacity, networking and elasticity.

- Cloud data warehouse and lakehouse platforms such as Google BigQuery, Azure Synapse Analytics, Amazon Redshift, Snowflake and Databricks centralize enterprise data and enable governance and performance at scale.

- Large language models (LLMs) from OpenAI, Anthropic, Mistral, Meta and Google provide general reasoning capability that can be adapted to company-specific use cases.

This layer is mostly bought. It scales power and flexibility, but competitive advantage rarely originates here. The advantage lies in how the upper layers are structured and governed.

System of record layer

Above hyperscale sits the company’s operational backbone. This is where company truth lives.

CRM, CMS, DAM, MAP, CDP, ecommerce and PIM systems manage customer and product data, enforce consent and identity resolution, apply pricing logic and embed governance and regulatory compliance. Together, they ensure that company data remains accurate, auditable and policy-aligned across regions and business units.

Most of these are long-tail commercial SaaS solutions that provide reliability and control. Agents shouldn’t overwrite this layer; they should operate on top of it.

System of differentiation layer

Above systems of record sit the capabilities that express business strategy. This layer reflects how a company chooses to compete.

These are often hypertail applications, mostly built rather than bought, using low-code or no-code platforms or custom development. Customer portals, partner portals, dealer locators, pricing calculators, product configurators and orchestration tools typically live here.

While systems of record safeguard truth, systems of differentiation make brands stand out. Together, these three layers form the deterministic foundation of the stack.

Intent model layer

On top of this deterministic base sits the probabilistic block, which starts with intent. This is the layer where hyperscale LLMs are trained, tuned and wrapped to behave according to your company standards.

In practice, you take general-purpose models and adjust them to company standards, including:

- Brand and tone.

- Product truth.

- Approved claims.

- Legal and compliance requirements.

- Privacy constraints.

- Risk appetite.

You also package the rules and decision logic that determine what an agent is allowed to do, when it must escalate and which actions require human approval. This is also where data from systems of record is prepared for agent use, so agents act on consistent definitions of customers, products and policies instead of improvising from fragmented context. A brand LLM for marketing is a typical example of this layer.
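Those rules are easiest to govern when they are explicit and deterministic, even though the agents they constrain are not. A minimal Python sketch, assuming hypothetical action names, an assumed confidence threshold and a simple consent flag (none of these come from a specific product), shows how a single policy function can return allow, escalate, require approval or deny for every agent action:

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_APPROVAL = "require_approval"  # a human must sign off first
    ESCALATE = "escalate"                  # hand the case to a human
    DENY = "deny"


@dataclass(frozen=True)
class ActionRequest:
    agent: str
    action: str               # e.g. "send_email", "change_price"
    confidence: float         # the agent's self-reported confidence, 0.0 to 1.0
    customer_consented: bool  # consent status read from the system of record


# Hypothetical policy tables standing in for legal, brand and risk rules.
CONTACT_ACTIONS = {"send_email"}
ALLOWED_ACTIONS = {"draft_copy", "send_email", "update_segment"}
APPROVAL_ACTIONS = {"change_price", "publish_claim"}
MIN_CONFIDENCE = 0.7  # assumed risk-appetite threshold


def evaluate(req: ActionRequest) -> Decision:
    """Apply the intent layer's rules in a fixed order; the first match wins."""
    if req.action in CONTACT_ACTIONS and not req.customer_consented:
        return Decision.DENY  # privacy constraint is checked first
    if req.action in APPROVAL_ACTIONS:
        return Decision.REQUIRE_APPROVAL
    if req.action not in ALLOWED_ACTIONS:
        return Decision.DENY  # unknown actions are never allowed by default
    if req.confidence < MIN_CONFIDENCE:
        return Decision.ESCALATE
    return Decision.ALLOW
```

For example, `evaluate(ActionRequest("sdr_agent", "change_price", 0.95, True))` returns `Decision.REQUIRE_APPROVAL` no matter how confident the agent is. Keeping the rules in one place means every agent, bought or built, is held to the same standard.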

This layer is mainly built and doesn’t deliver end-user outcomes by itself. It makes every agent outcome safer, more consistent and more scalable.

Agent capability layer

Above the intent model layer sit AI agents that deliver common business capabilities across marketing, sales, customer support and data analysis.

These agents are typically developed and sold by third-party vendors. Commercially, they resemble SaaS products, often priced on usage, volume or outcome-based models. Organizations adopt them to accelerate specific capabilities without having to build everything themselves.

They operate probabilistically, but within the boundaries defined by the intent model layer. They act on systems of record and systems of differentiation without redefining company truth.

This layer scales shared capabilities across the organization while remaining aligned with the company’s defined intent.

Agent differentiation layer

At the top sit company-built AI agents designed around proprietary workflows, internal data and domain expertise. They are often created with low-code automation platforms such as n8n or AI coding tools such as Lovable and Replit. These hypertail agents reflect how the brand chooses to compete and operate.

Unlike commercially available agents, these are built in-house or with strategic partners. They are tailored to company-specific logic, segmentation models, pricing strategies, go-to-market processes or retention frameworks that external vendors can’t fully replicate.

Examples might include a custom go-to-market agent aligned to internal ICP definitions, a churn-prevention agent trained on proprietary behavioral signals or a pricing intelligence agent that operates within company-defined policy constraints.
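To make "operates within company-defined policy constraints" concrete for the pricing example, here is a minimal Python sketch. The `PricePolicy` fields and the clamping rule are illustrative assumptions, not a prescribed pricing model: the agent can suggest any price, but the value it returns is clamped between the policy's lowest allowed price and the list price.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PricePolicy:
    """Hypothetical constraints, owned by the system of record rather than the agent."""
    list_price: float
    floor_price: float   # absolute minimum the company will accept
    max_discount: float  # e.g. 0.15 means at most 15% off list price


def constrain_price(agent_suggestion: float, policy: PricePolicy) -> float:
    """Clamp the agent's suggested price into the range the policy allows."""
    lowest_allowed = max(policy.floor_price,
                         policy.list_price * (1 - policy.max_discount))
    clamped = min(max(agent_suggestion, lowest_allowed), policy.list_price)
    return round(clamped, 2)


policy = PricePolicy(list_price=100.0, floor_price=80.0, max_discount=0.15)
print(constrain_price(70.0, policy))   # 85.0: the 15% discount cap is stricter than the floor here
print(constrain_price(92.5, policy))   # 92.5: already within bounds
print(constrain_price(120.0, policy))  # 100.0: never above list price
```

The agent keeps its freedom to reason about the best price in context, while the limits themselves stay deterministic and auditable.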

This is where differentiation emerges in the probabilistic block. When intent is clearly defined and systems of record remain stable, these agents become strategic assets rather than isolated experiments.
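For architects who want to map their own tools onto the framework, the six layers described above can be captured as a simple, ordered data model. This is a minimal Python sketch; the `Block` and `Sourcing` labels summarize the bought-versus-built guidance given for each layer and are simplifications, since real stacks usually mix both.

```python
from dataclasses import dataclass
from enum import Enum


class Block(Enum):
    DETERMINISTIC = "deterministic"
    PROBABILISTIC = "probabilistic"


class Sourcing(Enum):
    BOUGHT = "mostly bought"
    BUILT = "mostly built"


@dataclass(frozen=True)
class Layer:
    name: str
    block: Block
    sourcing: Sourcing
    examples: tuple[str, ...]


# Ordered bottom-up, following the walkthrough of the framework.
AGENTIC_STACK = (
    Layer("hyperscale", Block.DETERMINISTIC, Sourcing.BOUGHT,
          ("cloud infrastructure", "data warehouse", "LLMs")),
    Layer("system of record", Block.DETERMINISTIC, Sourcing.BOUGHT,
          ("CRM", "PIM", "CDP")),
    Layer("system of differentiation", Block.DETERMINISTIC, Sourcing.BUILT,
          ("customer portal", "product configurator")),
    Layer("intent model", Block.PROBABILISTIC, Sourcing.BUILT,
          ("brand LLM", "escalation rules")),
    Layer("agent capability", Block.PROBABILISTIC, Sourcing.BOUGHT,
          ("sales agent", "customer support agent")),
    Layer("agent differentiation", Block.PROBABILISTIC, Sourcing.BUILT,
          ("churn-prevention agent", "pricing intelligence agent")),
)


def layers_in(block: Block) -> list[str]:
    """Return the names of the layers in one block, bottom-up."""
    return [layer.name for layer in AGENTIC_STACK if layer.block is block]


print(layers_in(Block.DETERMINISTIC))
# ['hyperscale', 'system of record', 'system of differentiation']
```

Filtering the same tuple by `Sourcing.BUILT` instead surfaces the three layers a company typically builds and maintains itself.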

Turning agent sprawl into strategic leverage

AI agents are here to stay. The question is whether they operate inside a coherent architecture or alongside it.

When probabilistic systems are layered on top of deterministic foundations without clear intent and guardrails, complexity scales faster than value. When those boundaries are deliberately designed, agents amplify what already works.

The agentic stack framework turns sprawl into structure and experimentation into sustainable advantage.

The post How to architect AI agents in your martech stack appeared first on MarTech.